llms.txt Guide: The New Standard for AI Discoverability (2026)

If robots.txt tells AI crawlers where they can go on your website, llms.txt tells them what they will find. This proposed web standard is the next big step in AI SEO. The proposal is published at llmstxt.org, and it is gaining traction as a way for websites to communicate with AI systems.

Right now, fewer than 0.3% of websites have an llms.txt file. This means there is a huge opportunity for early adopters. By adding llms.txt to your website, you can improve your AI Visibility Score (the rating reported by AI Crawler Check) by up to 35 points and help AI tools understand your website much better.

In this guide, you will learn what llms.txt is, why it matters, how to create one, what to put in it, and how it works together with robots.txt. We will also walk through real examples so you can see exactly what a good llms.txt file looks like.

Adding llms.txt = +20 points. Adding llms-full.txt = +15 points. Total possible boost: +35 points on your AI Visibility Score.

What is llms.txt?

llms.txt is a plain text file that you put at the root of your website. An AI system that supports the convention looks for this file at yourdomain.com/llms.txt. The file gives the AI system a quick, structured summary of what your website is about.
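As a rough illustration of the "root of your website" rule, the sketch below derives the expected file location from any page URL on a site. The function name `llms_txt_url` and the sample URLs are assumptions made for this example; they are not part of the llms.txt proposal.

```python
from urllib.parse import urlsplit, urlunsplit


def llms_txt_url(site_url: str, filename: str = "llms.txt") -> str:
    """Return the root-level URL where the file is expected to live."""
    parts = urlsplit(site_url)
    # Keep only the scheme and host; drop any path, query, or fragment,
    # because the file sits at the site root, next to robots.txt.
    return urlunsplit((parts.scheme, parts.netloc, "/" + filename, "", ""))


print(llms_txt_url("https://yourdomain.com/blog/post?x=1"))
print(llms_txt_url("https://yourdomain.com", "llms-full.txt"))
```

The same helper covers llms-full.txt, since both files are expected in the same place.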

Think of llms.txt as a business card for AI systems. Just like a business card tells a person your name, job, and contact information, llms.txt tells AI systems your website name, what it does, and where to find the most important pages.

The name "llms.txt" stands for "Large Language Models text file." It is designed specifically for AI systems built on large language models (LLMs), such as ChatGPT, Claude, Gemini, and Perplexity. These systems can read your llms.txt file and use the information to better understand your website, which leads to more accurate mentions and citations.

Let us compare llms.txt to the other important files on your website to make the differences clear:

| File | Purpose | Who Uses It | What It Controls |
|---|---|---|---|
| robots.txt | Access control | All crawlers | Which bots can visit which pages |
| sitemap.xml | Page discovery | Search engines, AI crawlers | Which pages exist and when they were updated |
| llms.txt | AI context | AI systems (LLMs) | What your site is about and which pages matter most |
| llms-full.txt | Detailed AI context | AI systems (LLMs) | Comprehensive information about your site content |

Why llms.txt Matters for Your Website

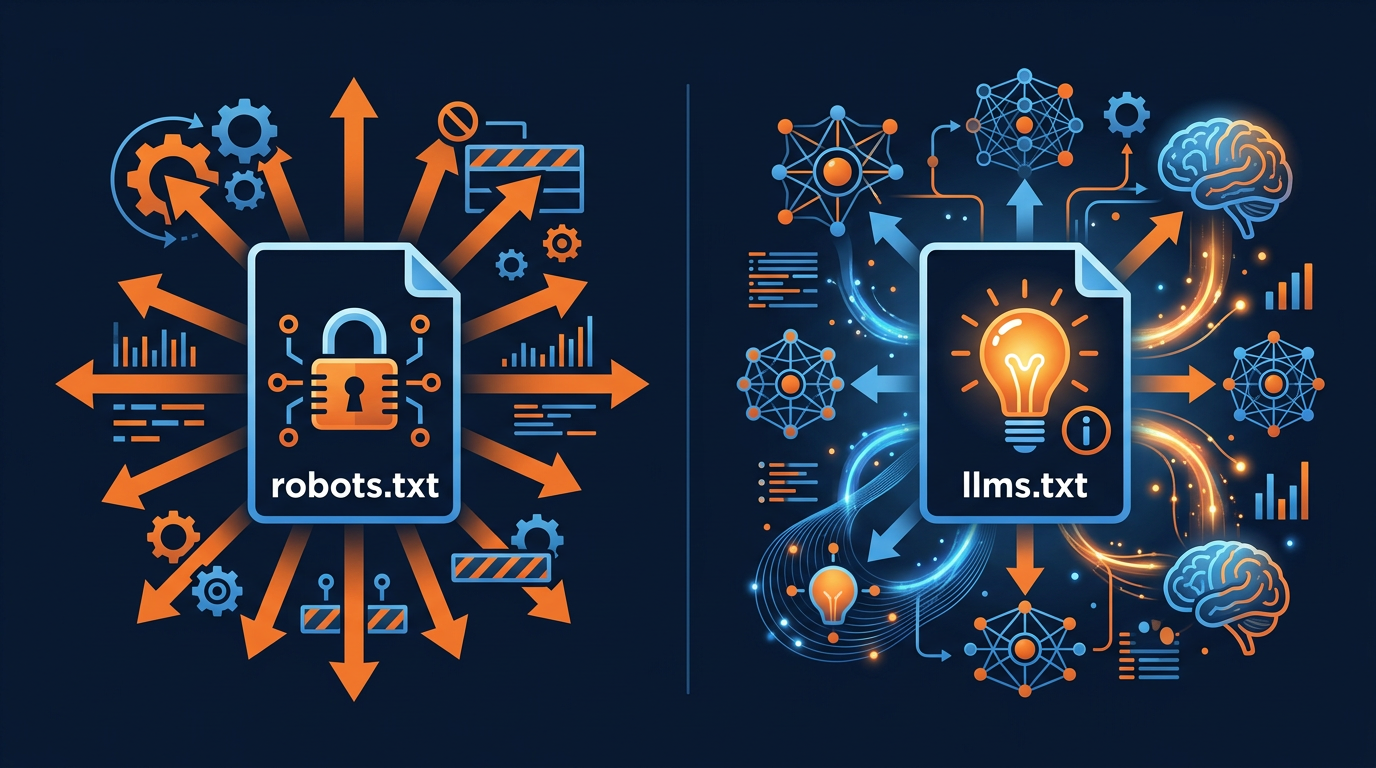

You might be wondering: "Why do I need another file on my website? Isn't robots.txt enough?" The answer is that robots.txt and llms.txt do very different jobs. robots.txt controls access (which bots can visit). llms.txt provides context (what your site is about). You need both for the best AI visibility.

Here are five reasons why llms.txt matters:

1. AI Systems Understand Your Site Better

Without llms.txt, an AI system has to crawl many pages on your website to figure out what it is about. With llms.txt, the AI system gets a clear summary right away. This leads to more accurate mentions, better citations, and more relevant answers when users ask about topics related to your website.

2. Your AI Visibility Score Goes Up

Adding llms.txt gives you 20 points on your AI Visibility Score. Adding llms-full.txt gives you 15 more. That is 35 points total, which can move you from a "Fair" rating to "Excellent." No other single change has this big an impact on your AI score.

3. You Get an Early-Mover Advantage

Less than 0.3% of websites have llms.txt. By adding it now, you are ahead of 99.7% of websites. As AI search continues to grow and more AI systems start using llms.txt, the websites that adopted it early will have the strongest AI visibility.

4. Better Citations and Links from AI Tools

When an AI system understands your website well, it is more likely to cite your pages correctly and link to them in its answers. llms.txt helps AI systems know which pages to link to for specific topics, which can drive more traffic to your most important pages.

5. Easy to Set Up (Takes Less Than 15 Minutes)

Creating an llms.txt file is simple. You do not need any technical skills or special tools. It is just a plain text file. Follow the step-by-step guide below and you will have yours ready in less than 15 minutes.

llms.txt Format and Structure

The llms.txt file uses a Markdown-like format with specific sections. It is designed to be both human-readable and machine-readable. The basic structure has five sections, broken down here:

- Title (# Your Name) is the name of your website or organization. Use a Markdown H1 heading (the # symbol).

- Description (> text) is a short summary of what your site does. Use blockquote format (the > symbol). Keep it to 1-3 sentences.

- Key Pages (## Key Pages) lists your most important pages with links and short descriptions. Use Markdown link format: [Name](URL): Description.

- Topics (## Topics) lists the main subjects your website covers. This helps AI systems categorize your content.

- Contact (## Contact) provides ways to reach you. This is optional but recommended.
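The sections above can be sketched as a small generator that assembles the file from structured data. This is a minimal sketch, not a reference implementation: the `Page` and `SiteSummary` classes, the `render_llms_txt` function, and the "Example Candle Shop" data on example.com are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    name: str
    url: str
    description: str


@dataclass
class SiteSummary:
    title: str
    description: str
    key_pages: list[Page] = field(default_factory=list)
    topics: list[str] = field(default_factory=list)
    contact: list[str] = field(default_factory=list)


def render_llms_txt(site: SiteSummary) -> str:
    """Render the five sections described above as Markdown-like text."""
    lines = [f"# {site.title}", "", f"> {site.description}", ""]
    lines += ["## Key Pages", ""]
    lines += [f"- [{p.name}]({p.url}): {p.description}" for p in site.key_pages]
    lines += ["", "## Topics", ""]
    lines += [f"- {topic}" for topic in site.topics]
    if site.contact:  # Contact is optional, so emit it only when provided.
        lines += ["", "## Contact", ""]
        lines += [f"- {entry}" for entry in site.contact]
    return "\n".join(lines) + "\n"


# Hypothetical site data, used only to show the output shape.
example = SiteSummary(
    title="Example Candle Shop",
    description="Handmade soy and beeswax candles, shipped worldwide.",
    key_pages=[
        Page("Home", "https://example.com/", "Storefront and featured collections"),
        Page("Shipping", "https://example.com/shipping", "Shipping and return policies"),
    ],
    topics=["Soy candles", "Beeswax candles", "Candle accessories"],
)
rendered = render_llms_txt(example)
print(rendered)
```

Generating the file from data rather than editing it by hand makes it easier to keep the page list current when the site changes.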

Real-World Example: AI Crawler Check's llms.txt

Let us look at a real example: AI Crawler Check's own llms.txt file, which you can view at aicrawlercheck.com/llms.txt.

Notice how clear and organized this file is. An AI system can read it in less than a second and immediately understand what the website does, what tools it offers, what blog content it has, and what topics it covers. This is much faster and more accurate than crawling dozens of pages to figure out the same information.
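To make "machine-readable" concrete, here is a minimal sketch of how a program might read such a file. The sample text is hypothetical (it is not AI Crawler Check's actual file), the `parse_llms_txt` function is an illustration only, and the parsing rules assume the section layout described in this guide. Real AI systems may process the file differently.

```python
import re

# Matches "- [Name](URL): Description"; the description part is optional.
LINK = re.compile(
    r"^- \[(?P<name>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<desc>.*))?$"
)


def parse_llms_txt(text: str) -> dict:
    """Split an llms.txt file into title, description, and sections."""
    result = {"title": None, "description": "", "sections": {}}
    description_lines = []
    current = None  # name of the "## " section being read, if any
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("## "):
            current = line[3:].strip()
            result["sections"][current] = []
        elif line.startswith("# ") and result["title"] is None:
            result["title"] = line[2:].strip()
        elif line.startswith(">") and current is None:
            description_lines.append(line[1:].strip())
        elif line.startswith("- ") and current is not None:
            match = LINK.match(line)
            # Link items become dicts with name/url/desc; plain bullets keep their text.
            item = match.groupdict() if match else {"text": line[2:].strip()}
            result["sections"][current].append(item)
    result["description"] = " ".join(description_lines)
    return result


SAMPLE = """# Example Candle Shop

> Handmade soy and beeswax candles.

## Key Pages

- [Home](https://example.com/): Storefront and featured collections

## Topics

- Soy candles
"""

parsed = parse_llms_txt(SAMPLE)
print(parsed["title"])
print(parsed["sections"]["Key Pages"][0]["url"])
```

A single pass over a few dozen lines is enough to recover the site name, summary, and priority pages, which is the point the paragraph above makes about speed.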

llms-full.txt: The Detailed Version

While llms.txt is a short summary (usually 20 to 100 lines), llms-full.txt is the full version. It provides much more detail about your website, including full page descriptions, FAQs, product information, your company story, and anything else that helps AI systems deeply understand your site.

Here is a simple example of what llms-full.txt might include:

The llms-full.txt file can be as long as you need. Some websites have files that are hundreds of lines long, covering every product, every service, and every important page on the site. The more useful information you include, the better AI systems can understand and represent your website.

Adding llms-full.txt gives you 15 more points on your AI Visibility Score, for a total of 35 points when combined with llms.txt.
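The point arithmetic can be written out as a short sketch. The two point values come from this guide; the additive model, the cap at 100, the function name `projected_score`, and the starting score of 45 are assumptions chosen for illustration, since the tool's full scoring formula is not described here.

```python
LLMS_TXT_POINTS = 20       # stated in this guide
LLMS_FULL_TXT_POINTS = 15  # stated in this guide
MAX_SCORE = 100            # assumption: the score is capped at 100


def projected_score(current: int, has_llms_txt: bool, has_llms_full_txt: bool) -> int:
    """Estimate the score after adding one or both files (simple additive model)."""
    bonus = 0
    if has_llms_txt:
        bonus += LLMS_TXT_POINTS
    if has_llms_full_txt:
        bonus += LLMS_FULL_TXT_POINTS
    return min(MAX_SCORE, current + bonus)


print(projected_score(45, True, False))  # llms.txt only
print(projected_score(45, True, True))   # both files
```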

Step-by-Step: How to Create Your llms.txt

Creating an llms.txt file is simple. Follow these steps and you will be done in less than 15 minutes:

Open a Text Editor

Open any plain text editor (Notepad on Windows, TextEdit on Mac, VS Code, or any other editor). Create a new file. The important thing is that it must be a plain text file, not a Word document or rich text file. TextEdit opens new documents as rich text by default, so choose Format > Make Plain Text before you start typing. The file should use UTF-8 encoding, which is the default for most text editors.

Add Your Site Name

On the first line, type your website name with a hash symbol in front of it: # Your Website Name. This is a Markdown heading. Use your brand name or the name visitors know you by.

Write a Brief Description

Below the title, add a short description of your website using the blockquote format (start each line with > ). Keep it to 1 to 3 sentences. Explain what your website does, who it is for, and what makes it useful. Be clear and specific.

List Your Key Pages

Create a section called ## Key Pages and list your 5 to 15 most important pages. Use the format: - [Page Name](URL): Description. Include your homepage, main product pages, important blog posts, and any tools or resources. Always use full, absolute URLs (including https://).

Add Your Topics

Create a section called ## Topics and list the main subjects your website covers. Use simple bullet points. This helps AI systems categorize your content and match it to relevant user questions. Include 5 to 10 topics that best describe your expertise.

Save as llms.txt

Save the file with the exact name llms.txt (all lowercase). Make sure your editor does not append a second extension, which would produce llms.txt.txt. The file must be saved in plain text format.

Upload to Your Website Root

Upload the file to the root directory of your website, the same place where your robots.txt file lives. The file should be accessible at yourdomain.com/llms.txt. Test it by visiting this URL in your browser. You should see the plain text content.
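The browser test can also be scripted. The sketch below checks three things this guide asks for: the URL responds successfully, the server sends a text/plain Content-Type, and the body starts with a "# Title" heading. The function names and the User-Agent string are hypothetical, and the checks reflect this guide's recommendations rather than any official validator.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def check_llms_response(status: int, content_type: str, body: str) -> list[str]:
    """Return a list of problems; an empty list means the response looks fine."""
    problems = []
    if status != 200:
        problems.append(f"expected HTTP 200, got {status}")
    # Ignore parameters such as "; charset=utf-8" when comparing media types.
    media_type = content_type.split(";")[0].strip().lower()
    if media_type != "text/plain":
        problems.append(f"expected text/plain, got {media_type or 'no Content-Type'}")
    if not body.lstrip().startswith("# "):
        problems.append("file does not start with a '# Title' heading")
    return problems


def fetch_and_check(url: str) -> list[str]:
    """Fetch the URL over the network and run the checks above."""
    request = Request(url, headers={"User-Agent": "llms-txt-check/0.1"})
    try:
        with urlopen(request, timeout=10) as response:
            body = response.read().decode("utf-8")
            content_type = response.headers.get("Content-Type", "")
            return check_llms_response(response.status, content_type, body)
    except HTTPError as err:
        # urlopen raises for 4xx/5xx responses instead of returning them.
        return [f"expected HTTP 200, got {err.code}"]


# Offline demonstration with hand-written values (no network access needed).
print(check_llms_response(200, "text/plain; charset=utf-8", "# My Site\n\n> Summary\n"))
print(check_llms_response(404, "text/html", "<html>Not found</html>"))
```

To test a live site, call `fetch_and_check("https://yourdomain.com/llms.txt")` with your own domain.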

Verify with AI Crawler Check

Go to AI Crawler Check and scan your website. The tool will detect your llms.txt file and add 20 points to your AI Visibility Score. If you also created an llms-full.txt file, that adds 15 more points. Your total score should now be significantly higher.

Best Practices for llms.txt

To get the most out of your llms.txt file, follow these best practices:

Keep llms.txt short and focused. AI systems prefer clear, structured summaries. Do not make your llms.txt hundreds of lines long. Save detailed information for llms-full.txt instead.

Use absolute URLs for all links. Always include the full URL with https://. Do not use relative paths like /page-name. AI systems need the complete URL to find your pages.
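A quick way to enforce this rule is to scan the file for links that are not full https:// URLs. The following is a minimal sketch; the function name `find_non_absolute_links` and the sample content are made up for the example, and the regular expression only handles simple Markdown links without spaces in the URL.

```python
import re
from urllib.parse import urlsplit

# Captures the name and URL of simple Markdown links: [Name](URL)
MARKDOWN_LINK = re.compile(r"\[([^\]]+)\]\(([^)\s]+)\)")


def find_non_absolute_links(llms_txt: str) -> list[tuple[str, str]]:
    """Return (name, url) pairs whose URL is not a full https:// URL."""
    flagged = []
    for name, url in MARKDOWN_LINK.findall(llms_txt):
        parts = urlsplit(url)
        # A usable link needs both the https scheme and a host name.
        if parts.scheme != "https" or not parts.netloc:
            flagged.append((name, url))
    return flagged


SAMPLE_LINKS = """## Key Pages

- [Home](https://example.com/): Storefront
- [Blog](/blog): Articles
- [Docs](http://example.com/docs): Guides
"""

print(find_non_absolute_links(SAMPLE_LINKS))
```

In this sample, the relative /blog path and the http:// link are both flagged, while the https:// homepage link passes.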

Update when your site changes. If you add new products, new pages, or change your site structure, update your llms.txt to reflect these changes. A current file is more useful than an old one.

Use plain text format only. No HTML, no rich text, no Word documents. The Content-Type header should be text/plain. Most web servers handle this automatically for .txt files.

Make descriptions specific. Instead of "Our blog has useful articles," say "Our blog covers AI crawler blocking, robots.txt optimization, and AI SEO strategies with step-by-step guides." The more specific, the better.

Combine with robots.txt optimization. llms.txt works best when your robots.txt is also optimized for AI crawlers. Having a great llms.txt file but blocking all AI bots in robots.txt does not help much.

List your most valuable pages first. AI systems may give more weight to the pages listed near the top of your llms.txt. Put your homepage, main products, and most important content first.

How llms.txt and robots.txt Work Together

It is important to understand that llms.txt and robots.txt are partners, not replacements. They do different jobs and you need both for the best AI visibility. Here is how they work together:

robots.txt = Access Control

robots.txt tells bots: "You can visit these pages. You cannot visit those pages." It is like a security guard at the door. It controls who gets in and where they can go. Learn more in our AI crawler blocking guide.

llms.txt = Context Provider

llms.txt tells AI systems: "Here is what our website is about. These are our most important pages. These are the topics we cover." It is like a welcome guide that helps visitors understand your building.

The best setup combines both files. Your robots.txt allows AI crawlers to access your content (at least the search-facing bots). Your llms.txt tells those crawlers what your content is about and where to find the best information. Together, they maximize your chances of being mentioned and linked in AI search results.

For the best results, also make sure your sitemap.xml is up to date. AI crawlers use sitemaps to discover all your pages efficiently. The three files working together (robots.txt for access, sitemap.xml for discovery, llms.txt for context) give you the best AI visibility possible.
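To check that the two files are not working against each other, you can test whether your robots.txt lets AI crawlers reach llms.txt at all. The sketch below uses Python's standard `urllib.robotparser` module. The crawler names are examples, the sample robots.txt is invented, and the helper name `blocked_crawlers` is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Example AI crawler tokens; extend this list for the bots you care about.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "Google-Extended"]


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the agents that robots.txt forbids from fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
SAMPLE_ROBOTS = """User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(SAMPLE_ROBOTS, "https://example.com/llms.txt"))
```

If the returned list is not empty, those crawlers cannot read your llms.txt, no matter how well the file is written.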

Real-World Examples of llms.txt in Action

To help you understand the real impact of llms.txt, let us look at how different types of websites have used it successfully. These examples show that llms.txt works for all kinds of sites, from small blogs to large enterprise platforms.

A marketing agency based in Austin, Texas added llms.txt and llms-full.txt to their website in January 2026. Before adding these files, their site had an AI Visibility Score of 45. After adding well-structured llms.txt files with clear descriptions of their services, case studies, and blog content, their score jumped to 80. Within six weeks, they noticed a 23% increase in traffic from AI referral sources. Users were finding them through ChatGPT, Perplexity, and Google AI Overviews when asking for marketing agency recommendations.

An online learning platform used llms.txt to organize their course catalog for AI systems. They listed each course category with a brief description and linked to the main course pages. They also included their instructor profiles and their most popular free resources. This helped AI assistants give accurate recommendations when users asked questions like "Where can I learn data science online?" The platform saw a 35% increase in course enrollments from AI-referred visitors.

A small e-commerce store selling handmade candles added a simple llms.txt file with just 15 lines. It listed their product categories (soy candles, beeswax candles, candle accessories), their best-selling collections, and their shipping and return policies. This small change helped AI assistants recommend their products when users asked for handmade candle gifts. The store owner reported that AI referral traffic became their third-largest traffic source within two months.

A developer documentation site is another great example. They structured their llms.txt to mirror their documentation hierarchy: getting started guides, API references, tutorials, and changelog. Because their llms.txt was so well organized, AI coding assistants could direct developers to the exact documentation page they needed. This reduced their support ticket volume by 15% as developers found answers faster through AI tools.

These examples all share one common theme: the websites that benefit most from llms.txt are the ones that take time to organize their content clearly. A messy llms.txt file is better than no file at all, but a well-structured file delivers much better results. Think about what questions users might ask an AI about your industry, and make sure your llms.txt helps AI systems find those answers on your site.

Summary and Next Steps

llms.txt is one of the easiest and most impactful things you can do for your website's AI visibility. Here are the key takeaways:

- llms.txt is a plain text file that tells AI systems what your website is about
- It adds up to 35 points to your AI Visibility Score (20 for llms.txt + 15 for llms-full.txt)
- Less than 0.3% of websites have adopted it, giving early movers a big advantage
- It works alongside robots.txt and sitemap.xml, not as a replacement
- Creating one takes less than 15 minutes with the step-by-step guide above
- Use absolute URLs, keep it organized, and update it when your site changes

Your next steps:

1. Check your current AI Visibility Score to see if you already have llms.txt
2. Create your llms.txt file using the step-by-step guide and examples above
3. Optimize your robots.txt to allow AI crawlers
4. Re-scan your site to verify the improvement in your score




Brian specializes in AI SEO and web crawler optimization. He built AI Crawler Check to help website owners navigate the rapidly evolving landscape of AI crawlers and search.
