How to Block AI Crawlers with Robots.txt (2026 Complete Guide)

AI crawlers from ChatGPT, Claude, Gemini, and Perplexity are visiting websites at a very fast rate in 2026. These bots collect your content for different reasons. Some use it to train AI models. Others use it to answer questions in real time. Whether you want to block them to protect your content or allow them for better AI visibility, your robots.txt file is the first line of control.

In this guide, you will learn exactly how to block or allow every major AI crawler. We will show you the exact code you need to add to your robots.txt file. We will also explain the trade-offs so you can make the best choice for your website. By the end, you will have a clear plan for your AI crawler strategy.

This guide is for website owners, SEO professionals, content creators, and anyone who wants to understand how AI bots interact with their website. You do not need any technical background to follow along. We will explain everything step by step.

Want to see which AI crawlers are currently blocked on your website? Run a free scan at AI Crawler Check. It checks 154+ bots in seconds and gives you an AI Visibility Score.

What Are AI Crawlers and Why Do They Matter?

AI crawlers are automated programs (also called bots) that visit websites and collect information. They work a lot like the bots that Google and Bing use to index web pages. But AI crawlers have a different goal. Instead of building a search index, they collect content that AI companies use to build and improve their language models.

In 2026, there are more than 150 known AI crawlers active on the web. Some of these bots are from big companies like OpenAI, Google, and Anthropic. Others come from smaller companies or research groups. Each bot has its own name, called a user-agent, and its own rules about what it collects.

AI crawlers matter because the access you give them directly affects two things. First, it determines whether your content is used to train AI models like ChatGPT and Claude. Second, it determines whether your content shows up in AI-powered search results. If you block all AI crawlers, your website becomes invisible to AI search tools. If you allow all of them, your content may be used for model training without your permission.

The good news is that you can control which bots access your site. The main tool for this is your robots.txt file. Let us start by understanding the two types of AI crawlers.

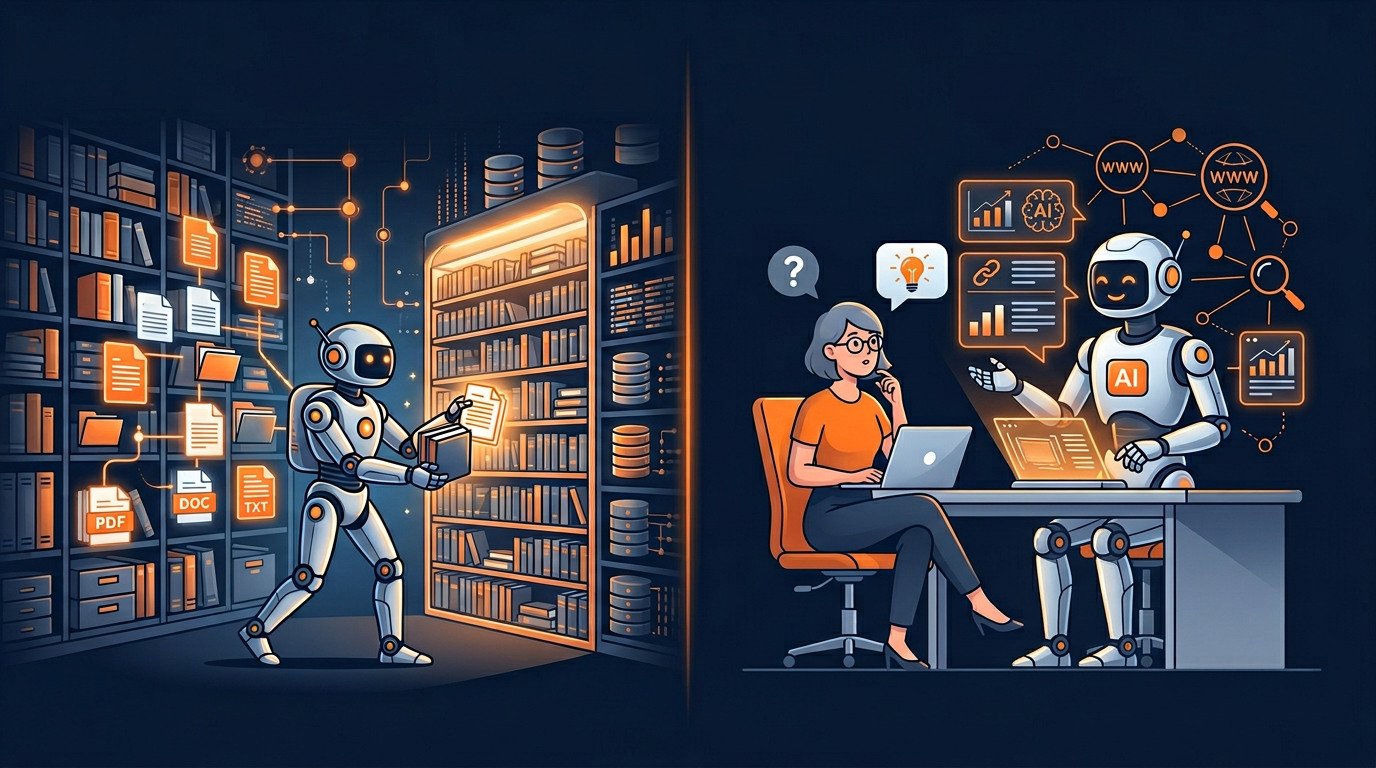

Two Types of AI Crawlers: Training vs. Search

Not all AI crawlers do the same thing. Understanding the difference between the two main types is important before you decide what to block. AI crawlers fall into two categories: training crawlers and search crawlers.

Training Crawlers

These bots collect content to train large language models. When a training crawler visits your website, it saves your text, images, and other content. Then it sends that data back to the AI company. The company uses your content to make their AI models better at writing, answering questions, and doing other tasks.

Examples: GPTBot (OpenAI), ClaudeBot (Anthropic), CCBot (Common Crawl), Google-Extended (Google AI training)

Search Crawlers

These bots visit your website in real time when a user asks a question to an AI tool. For example, when someone asks ChatGPT about a topic, the ChatGPT-User bot may visit your website right at that moment. It reads your page and uses the information to create an answer for the user. Your website may get credit with a link in the AI response.

Examples: ChatGPT-User (OpenAI Search), Perplexity-User (Perplexity Search), OAI-SearchBot, Claude-SearchBot

This difference is very important for your strategy. Many website owners want to block training crawlers (to protect their content from being used in AI models) but allow search crawlers (so their content shows up in AI search results and brings traffic). This is called selective blocking, and we will show you exactly how to do it later in this guide.

Some companies operate both types. For example, OpenAI has GPTBot for training and ChatGPT-User for search. These are separate bots with separate user-agent names. You can block one and allow the other. To learn more about OpenAI's crawlers, read our detailed guide on What is GPTBot.

The Tier 1 AI Crawlers You Must Know

Not all AI crawlers are equally important. We group them into tiers based on how much they affect your website's AI visibility. Tier 1 bots have the biggest impact on whether your content appears in AI-powered tools and search results. These are the bots you should focus on first.

Your decisions about these bots directly affect your AI Visibility Score. Here are the most important AI crawlers in 2026:

| Bot Name | Company | Type | User-Agent | Why It Matters |
|---|---|---|---|---|
| GPTBot | OpenAI | Training | GPTBot | Your content becomes part of future ChatGPT models |
| ChatGPT-User | OpenAI | Search | ChatGPT-User | Your content appears in ChatGPT search answers |
| ClaudeBot | Anthropic | Training | ClaudeBot | Your content is used to train Claude AI models |
| Google-Extended | Google | Training | Google-Extended | Controls whether Google may use your content for Gemini training and grounding (it is separate from Googlebot and does not affect Google Search) |
| PerplexityBot | Perplexity | Both | PerplexityBot | Used for both training and real-time search answers |
| OAI-SearchBot | OpenAI | Search | OAI-SearchBot | OpenAI's dedicated search indexing bot |
| Claude-SearchBot | Anthropic | Search | Claude-SearchBot | Anthropic's search crawler for Claude web search |

You can find the full list of all 154+ bots, including their user-agent strings, safety ratings, and blocking instructions, in our Bot Directory. For now, let us focus on the practical steps to control these bots.

What is robots.txt and How Does It Work?

Before we get into the blocking rules, let us make sure you understand what robots.txt is and how it works. The robots.txt file is a plain text file that sits at the root of your website. For example, if your website is example.com, your robots.txt file lives at example.com/robots.txt.

When a bot wants to visit your website, it first checks your robots.txt file. The file tells the bot which pages it can visit and which pages it cannot visit. Think of it like a set of rules posted at the door of a building. The rules say who can come in and where they can go.

The robots.txt file uses a simple format. Each rule has two parts: a User-agent line (which says which bot the rule is for) and a Disallow or Allow line (which says what the bot can or cannot do). Here is a basic example:
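As a minimal sketch, the Python snippet below holds a two-group robots.txt as a string and checks it with the standard library's urllib.robotparser. The rules, the example.com URL, and the is_allowed helper are illustrative assumptions, not a recommended configuration.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt: block GPTBot everywhere, allow every other bot.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if user_agent may fetch url under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

print(is_allowed(ROBOTS_TXT, "GPTBot", "https://example.com/"))     # False
print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/"))  # True
```

Only the text inside ROBOTS_TXT belongs in the robots.txt file itself; the Python around it is just a way to check the result.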

The / symbol means "everything on the website." So Disallow: / means "block everything" and Allow: / means "allow everything." You can also be more specific. For example, Disallow: /private/ would only block the /private/ folder.

There is one very important thing to know: robots.txt rules are based on trust. The file asks bots to follow the rules, but it does not force them. Most big companies like OpenAI, Google, and Anthropic say their bots follow robots.txt rules. But some smaller or unknown scrapers may ignore the rules. For these, you may need extra protection at the server level.

For a complete guide to robots.txt settings and optimization, read our Robots.txt Best Practices for AI SEO in 2026 article.

Option 1: Block All AI Crawlers

If you want to completely stop all AI crawlers from visiting your website, you need to add a rule for each major bot. No single user-agent name matches only AI bots, and the wildcard * would also block regular search engines, so each AI crawler has to be listed by name. Here is the full list you should add to your robots.txt file:
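The exact list depends on which bots you care about. As a hedged sketch, the Python snippet below builds block rules for the crawlers named in this guide and verifies them with urllib.robotparser. The AI_BOTS list and the build_block_all and allowed helpers are illustrative assumptions, not a complete inventory of AI crawlers.

```python
from urllib.robotparser import RobotFileParser

# Crawlers named in this guide; extend the list as new bots appear.
AI_BOTS = [
    "GPTBot", "ChatGPT-User", "OAI-SearchBot", "ClaudeBot", "Claude-SearchBot",
    "Google-Extended", "PerplexityBot", "Perplexity-User", "CCBot", "Bytespider",
]

def build_block_all(bots):
    """Return robots.txt text with one 'Disallow: /' group per bot."""
    return "\n\n".join(f"User-agent: {bot}\nDisallow: /" for bot in bots) + "\n"

def allowed(robots_txt, bot, url="https://example.com/"):
    """Return True if bot may fetch url under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)

rules = build_block_all(AI_BOTS)
print(rules)                                     # paste this text into robots.txt
print(any(allowed(rules, b) for b in AI_BOTS))   # False: every listed bot is blocked
print(allowed(rules, "Googlebot"))               # True: search engines are untouched
```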

Warning: Blocking all AI crawlers means your content will NOT show up in ChatGPT, Claude, Gemini, Perplexity, or any other AI search tool. This can reduce your website's total traffic, because AI search is growing very fast. In 2026, AI search traffic is up more than 400% compared to the year before. Think carefully before you block everything.

Do not want to write robots.txt by hand? Use our free Robots.txt Generator with one-click presets for all 154+ bots. It takes less than a minute.

Option 2: Allow All AI Crawlers for Maximum Visibility

If your main goal is to get as much traffic as possible from AI tools, you should allow all AI crawlers. This gives you the best AI Visibility Score and means your content can show up in ChatGPT, Claude, Gemini, Perplexity, and other AI search results.

To allow all AI crawlers, make sure your robots.txt file does not have any Disallow rules for AI user-agents. You can also add explicit Allow rules to make your settings clear:
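A small sketch of the explicit version, assuming the same bot names used in this guide (the AI_BOTS list and the helper names are illustrative). It also shows that a robots.txt with no rules at all leaves these bots unrestricted, so the Allow lines mainly document your intent.

```python
from urllib.robotparser import RobotFileParser

# Illustrative list of Tier 1 bots from this guide.
AI_BOTS = ["GPTBot", "ChatGPT-User", "OAI-SearchBot", "ClaudeBot",
           "Claude-SearchBot", "Google-Extended", "PerplexityBot"]

def build_allow_all(bots):
    """Return robots.txt text with one explicit 'Allow: /' group per bot."""
    return "\n\n".join(f"User-agent: {bot}\nAllow: /" for bot in bots) + "\n"

def allowed(robots_txt, bot, url="https://example.com/"):
    """Return True if bot may fetch url under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)

explicit = build_allow_all(AI_BOTS)
print(explicit)                                         # paste this text into robots.txt
print(all(allowed(explicit, bot) for bot in AI_BOTS))   # True
print(all(allowed("", bot) for bot in AI_BOTS))         # True: no rules, no restrictions
```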

When you allow all AI crawlers, you get several benefits. First, your content can appear in AI search results from all major platforms. Second, your content may be cited and linked in AI-generated answers, which brings traffic back to your site. Third, if your content is in the training data, AI models may become more familiar with your brand and recommend it more often.

The main downside is that your content will be used for AI model training. This means AI companies will use your text, data, and other content to improve their systems. For some website owners, this is fine. For others, especially publishers and content creators who depend on their original content, this may not be acceptable.

For even better AI visibility, you should also create an llms.txt file. This file tells AI systems what your website is about and which pages are most important. It can add up to 35 points to your AI Visibility Score.

Option 3: Selective Blocking (The Smart Approach)

Most websites get the best results with a selective approach. This means you allow the search-facing AI bots (so your content shows up in AI answers) while blocking the training-only crawlers (so your content is not used to build AI models). This gives you the best of both worlds: AI search visibility without giving away your content for training.

Here is the recommended setup for selective blocking:
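One way to sketch such a setup, assuming the training and search bots listed earlier in this guide: the Python snippet below writes a Disallow group for each training crawler and an Allow group for each search crawler, then checks the result with urllib.robotparser. The two lists and the helper names are illustrative assumptions. PerplexityBot is left out because this guide classifies it as both training and search, so where it belongs is your call.

```python
from urllib.robotparser import RobotFileParser

# Split taken from the examples in this guide; adjust it to your own policy.
TRAINING_BOTS = ["GPTBot", "ClaudeBot", "CCBot", "Google-Extended"]
SEARCH_BOTS = ["ChatGPT-User", "OAI-SearchBot", "Perplexity-User", "Claude-SearchBot"]

def build_selective(training, search):
    """Block training crawlers, explicitly allow search crawlers."""
    groups = [f"User-agent: {bot}\nDisallow: /" for bot in training]
    groups += [f"User-agent: {bot}\nAllow: /" for bot in search]
    return "\n\n".join(groups) + "\n"

def allowed(robots_txt, bot, url="https://example.com/"):
    """Return True if bot may fetch url under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)

rules = build_selective(TRAINING_BOTS, SEARCH_BOTS)
print(rules)                                  # paste this text into robots.txt
print(allowed(rules, "GPTBot"))               # False: training crawler blocked
print(allowed(rules, "ChatGPT-User"))         # True: search crawler allowed
```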

This approach works well for most types of websites. News websites, blogs, e-commerce stores, and corporate websites can all benefit from selective blocking. You keep your content out of training data while still getting traffic from AI search tools.

To create this setup without writing code by hand, use our Robots.txt Generator. It has a "Selective Blocking" preset that creates this exact setup with one click. You can also customize it for your specific needs.

Option 4: Partial Blocking (Block Specific Paths)

Sometimes you do not want to block a bot from your entire website. Instead, you want to let AI crawlers see some pages but not others. For example, you might want to let GPTBot read your blog posts (to get AI search traffic) but block it from your premium content or member-only pages.

You can do this with path-specific rules in robots.txt:
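A minimal sketch of path-specific rules, checked with Python's urllib.robotparser. The /premium/ and /members/ paths and the example.com URLs are hypothetical; substitute your own folders.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical paths: keep GPTBot out of paid areas, allow everything else.
# The narrow Disallow lines come before the broad Allow so that parsers using
# "first match wins" and parsers using "longest match wins" agree.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /premium/
Disallow: /members/
Allow: /
"""

def allowed(robots_txt, bot, url):
    """Return True if bot may fetch url under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)

print(allowed(ROBOTS_TXT, "GPTBot", "https://example.com/blog/my-post"))      # True
print(allowed(ROBOTS_TXT, "GPTBot", "https://example.com/premium/course-1"))  # False
print(allowed(ROBOTS_TXT, "GPTBot", "https://example.com/members/profile"))   # False
```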

This approach is great for websites that have both free and paid content. You can share your free content with AI systems to get more visibility while keeping your paid content protected. Many news websites and online course platforms use this strategy.

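Remember how conflicting rules are resolved. Under the Robots Exclusion Protocol (RFC 9309), the most specific rule, meaning the one with the longest matching path, wins regardless of the order in which the rules appear. So if you have Disallow: /premium/ and Allow: / for the same bot, the bot will be blocked from /premium/ but allowed everywhere else. Some simpler parsers apply the first matching rule instead, so listing the narrower Disallow lines before a broad Allow keeps the result the same everywhere.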

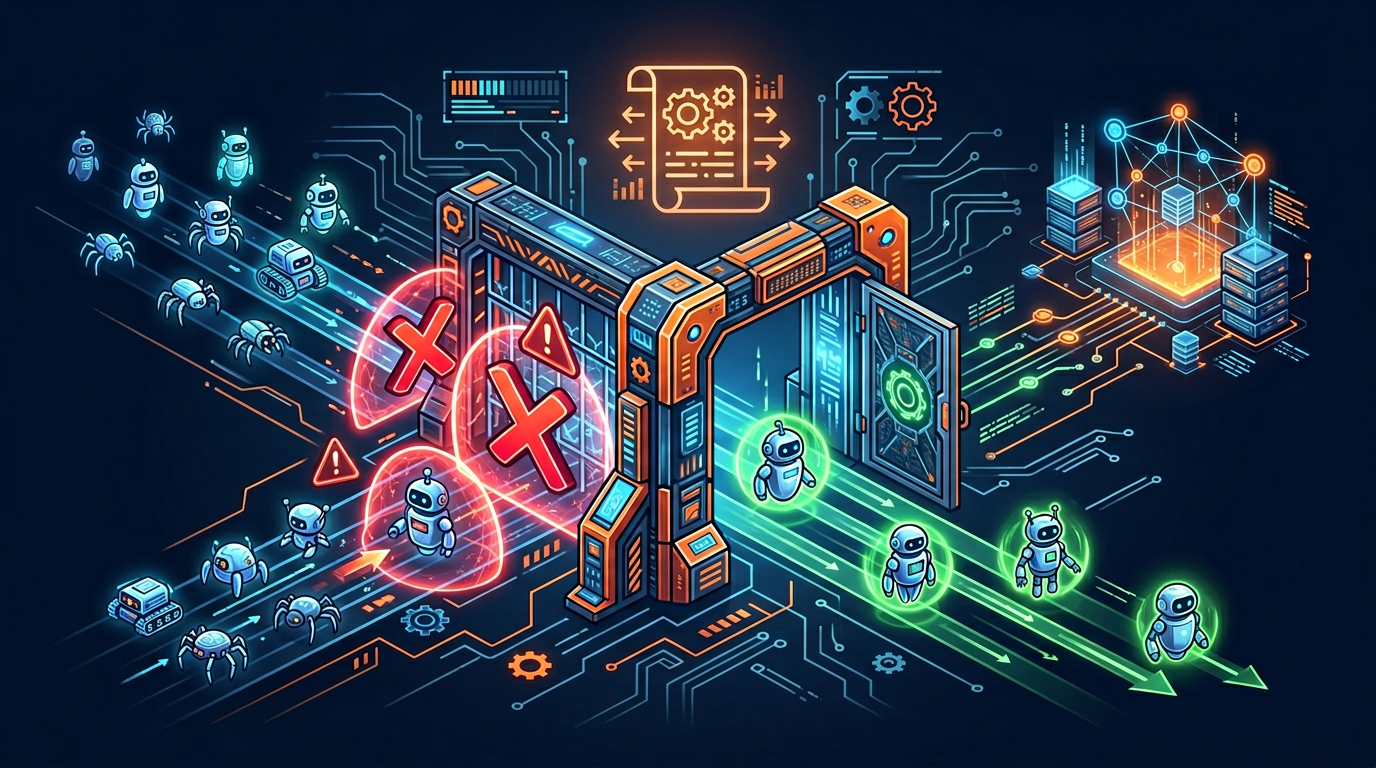

Going Beyond robots.txt: Extra Protection Methods

While robots.txt is the most common and easiest way to control AI crawlers, it has one big weakness: it is based on trust. A bot can choose to ignore your robots.txt rules. The major AI companies say they follow the rules, but you have no way to force every bot to follow them.

For stronger protection, you can use additional methods alongside your robots.txt file:

Nginx Server Block

If you use Nginx as your web server, you can add rules that block bots at the server level. This is more powerful than robots.txt because the server will refuse to send any content to the blocked bot. Here is an example:
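To illustrate what a server-level block does, independent of Nginx syntax, here is a hedged Python sketch written as a WSGI middleware. It refuses any request whose User-Agent header matches a block list and answers with 403 Forbidden. The BLOCKED_AGENTS pattern, the function names, and the sample user-agent strings are assumptions for illustration. This is not Nginx configuration, only the same idea expressed in code.

```python
import re

# Hypothetical block list; matching is case-insensitive on the User-Agent header.
BLOCKED_AGENTS = re.compile(r"GPTBot|ClaudeBot|CCBot|Bytespider", re.IGNORECASE)

def block_ai_bots(app):
    """Wrap a WSGI app and answer 403 when the User-Agent is on the block list."""
    def middleware(environ, start_response):
        user_agent = environ.get("HTTP_USER_AGENT", "")
        if BLOCKED_AGENTS.search(user_agent):
            start_response("403 Forbidden", [("Content-Type", "text/plain")])
            return [b"Forbidden\n"]
        return app(environ, start_response)
    return middleware

def demo_app(environ, start_response):
    """Stand-in for the real website."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"Hello\n"]

def status_for(user_agent):
    """Send a fake request through the wrapped app and return the status line."""
    seen = []
    wrapped = block_ai_bots(demo_app)
    wrapped({"HTTP_USER_AGENT": user_agent}, lambda status, headers: seen.append(status))
    return seen[0]

print(status_for("Mozilla/5.0 (compatible; GPTBot/1.0)"))  # 403 Forbidden
print(status_for("Mozilla/5.0 (Windows NT 10.0)"))         # 200 OK
```

Unlike robots.txt, this kind of check does not rely on the bot's cooperation, but it only works while the bot sends an honest User-Agent header.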

Apache .htaccess Block

If you use Apache as your web server, you can add similar rules in your .htaccess file:

Meta Robot Tags

You can also add meta tags to individual HTML pages to tell AI crawlers not to use your content. This gives you page-level control:

X-Robots-Tag HTTP Header

For non-HTML content like PDF files or images, you can use the X-Robots-Tag HTTP header. This tells bots not to use these files for AI training:

For more details on all these methods, including how to set them up for specific bot categories, check the blocking instructions on any bot's page in our Bot Directory. Every bot profile page shows you the exact code for robots.txt, Nginx, Apache, meta tags, and HTTP headers.

Common Mistakes to Avoid

When setting up your robots.txt for AI crawlers, many website owners make mistakes that can hurt their SEO or leave their content unprotected. Here are the most common mistakes and how to avoid them:

Mistake 1: Using User-agent: * to Block AI Bots

Some people add User-agent: * with Disallow: / and think it is the right way to block all AI crawlers. It does block them, but it also blocks every other compliant bot that has no group of its own, including Googlebot and Bingbot, so your pages can drop out of regular search. Always use specific user-agent names for AI crawlers instead of the wildcard.
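The difference is easy to see with Python's urllib.robotparser. In this small sketch (the two rule sets and the can_fetch helper are illustrative), the wildcard version locks out Googlebot as well, while the targeted version blocks only GPTBot.

```python
from urllib.robotparser import RobotFileParser

WILDCARD_BLOCK = "User-agent: *\nDisallow: /\n"        # the mistake
TARGETED_BLOCK = "User-agent: GPTBot\nDisallow: /\n"   # the fix

def can_fetch(robots_txt, bot, url="https://example.com/"):
    """Return True if bot may fetch url under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)

print(can_fetch(WILDCARD_BLOCK, "GPTBot"))     # False
print(can_fetch(WILDCARD_BLOCK, "Googlebot"))  # False: search engine blocked too
print(can_fetch(TARGETED_BLOCK, "GPTBot"))     # False
print(can_fetch(TARGETED_BLOCK, "Googlebot"))  # True
```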

Mistake 2: Forgetting About New Crawlers

New AI crawlers appear every few months. If you only block the bots you know about today, new bots will be able to access your site tomorrow. Check your robots.txt at least every three months and use AI Crawler Check to scan for new bots.

Mistake 3: Blocking Search Crawlers by Accident

Be careful not to confuse AI training crawlers with AI search crawlers. If you block ChatGPT-User (search), your content will not appear in ChatGPT search results. If you only wanted to block GPTBot (training), you need to check that your rules are correct. Use our Robots.txt Validator to double-check.

Mistake 4: Not Testing After Making Changes

Always test your robots.txt after making changes. A small typo can break your rules. Use the Robots.txt Validator and then run a scan at AI Crawler Check to make sure everything works as expected.
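If you prefer a quick local check, a sketch like the following can help. It uses Python's urllib.robotparser to report which bots a given robots.txt blocks. The audit helper and the two sample files are illustrative assumptions. The second sample contains a deliberate typo (Disalow), which this parser silently ignores, so the bot ends up allowed.

```python
from urllib.robotparser import RobotFileParser

CORRECT = "User-agent: GPTBot\nDisallow: /\n"
TYPO = "User-agent: GPTBot\nDisalow: /\n"   # misspelled directive is ignored

def audit(robots_txt, bots, url="https://example.com/"):
    """Map each bot name to 'allowed' or 'blocked' for the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: "allowed" if parser.can_fetch(bot, url) else "blocked" for bot in bots}

BOTS = ["GPTBot", "ChatGPT-User"]
print(audit(CORRECT, BOTS))  # {'GPTBot': 'blocked', 'ChatGPT-User': 'allowed'}
print(audit(TYPO, BOTS))     # {'GPTBot': 'allowed', 'ChatGPT-User': 'allowed'}
```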

Mistake 5: Blocking CSS, JavaScript, or Image Files

Never block CSS, JavaScript, or image files in your robots.txt. Both Google and AI crawlers need these files to understand your page properly. Only block page URLs, not asset files.

Step-by-Step: Set Up Your AI Crawler Rules

Now let us put everything together. Follow these steps to set up your AI crawler rules from start to finish:

Step 1: Check Your Current Status

Go to AI Crawler Check and enter your website URL. The tool will scan your robots.txt and show you which of the 154+ bots are currently blocked, allowed, or not addressed. It also gives you an AI Visibility Score from 0 to 100.

Step 2: Decide on Your Strategy

Choose one of the four options we covered: block all, allow all, selective blocking, or partial blocking. For most websites, we recommend selective blocking. This gives you AI search visibility while keeping your content out of training data.

Step 3: Generate Your robots.txt

Use our Robots.txt Generator to create the file. Select the bots you want to block or allow, and the tool will create the correct code for you. You can also use the preset buttons for common setups.

Step 4: Upload Your robots.txt

Upload the file to the root of your website. It must be at yourdomain.com/robots.txt (not in a subfolder). If you use WordPress, you can edit it through the Yoast SEO or Rank Math plugin. If you use a static site, add the file to your root directory.

Step 5: Validate Your Setup

Use the Robots.txt Validator to check for errors in your file. Then run another scan at AI Crawler Check to make sure the bots are blocked or allowed as you intended.

Step 6: Add Extra Protection (Optional)

If you want stronger protection, add server-level blocks using Nginx or Apache rules (see the section above). You can also add meta robot tags to individual pages for more precise control.

Step 7: Review Every Quarter

Set a reminder to check your robots.txt every 3 months. New AI crawlers appear regularly, and your strategy may need to change as AI search grows. Use the Batch Checker if you manage multiple websites.

AI Crawler Strategy by Website Type

Different types of websites need different AI crawler strategies. Here are our recommendations for the most common website types:

News and Media Websites

Recommendation: Selective blocking. Allow search bots (ChatGPT-User, OAI-SearchBot, Perplexity-User) to get traffic from AI search. Block training bots (GPTBot, ClaudeBot) to protect your original reporting. News content is very valuable for AI training, so protecting it makes sense.

E-commerce Stores

Recommendation: Allow most AI crawlers. AI search visibility can bring new customers to your products. Allow all Tier 1 bots for maximum visibility. Block aggressive scrapers (Bytespider, CCBot) that may steal product data for competitors. Consider partial blocking for pricing pages.

Blogs and Content Creators

Recommendation: Selective blocking or allow all. If your blog makes money from traffic, allow AI search bots to get more visitors. If you sell your content (courses, ebooks), block training bots to protect your paid material. Many bloggers choose to allow everything because AI search traffic is growing fast.

Corporate and SaaS Websites

Recommendation: Allow all Tier 1 bots. Corporate websites benefit a lot from AI visibility. When someone asks an AI tool about your industry, you want your company to be mentioned. Allow all major AI crawlers and add an llms.txt file for even better visibility.

Education and Government

Recommendation: Allow most crawlers for public information. Block training bots for protected research or student data. Government and education websites usually want their public information to be as easy to find as possible, including through AI tools.

The SEO Impact of Your AI Crawler Decisions

Let us be clear about one important fact: blocking AI crawlers does not affect your Google search ranking. Googlebot and AI crawlers are completely separate. You can block every AI crawler and your Google ranking will stay the same.

However, blocking AI crawlers does affect your overall traffic and visibility. In 2026, AI search is one of the fastest growing traffic sources on the internet. Here are some numbers to think about:

If you block all AI crawlers, you are missing out on a rapidly growing traffic source. If you allow all of them, you may be giving away your content for free. The best approach depends on your business model and goals. That is why selective blocking is so popular. It gives you the traffic benefits without the training data costs.

To understand exactly how your current settings affect your visibility, check your AI Visibility Score using our free tool. The score tells you how well your website is set up for AI access and where you can improve.

You can also learn about a new standard called llms.txt that works together with robots.txt. While robots.txt controls bot access, llms.txt tells AI systems what your website is about. Together, they give you the most control over your AI visibility.

Quick Summary and Next Steps

Here is a quick summary of everything we covered in this guide:

- AI crawlers come in two types: training crawlers (collect data for AI models) and search crawlers (fetch data for real-time AI answers)
- Your robots.txt file is the main tool to control which AI bots can access your website
- You can block all, allow all, use selective blocking, or do partial path blocking
- Selective blocking (allow search bots, block training bots) is the best option for most websites
- Blocking AI crawlers does not affect your Google search ranking
- For extra security, add server-level blocks with Nginx or Apache in addition to robots.txt
- Review your settings every 3 months as new bots appear regularly

Now that you know how to control AI crawlers, here are your next steps:

- Scan your website to see your current AI Visibility Score and which bots are blocked
- Generate your robots.txt with our free tool using the strategy that fits your website
- Read our robots.txt best practices guide for more advanced tips
- Learn about llms.txt to boost your AI visibility even further

Check Your AI Crawler Access Now

See which of the 154+ bots are blocked or allowed on your website. Free, instant results.

Free AI Bot Check

Frequently Asked Questions

How do I block ChatGPT from crawling my website?

Add User-agent: GPTBot followed by Disallow: / to your robots.txt file. This blocks OpenAI's training crawler. To also block ChatGPT Search, add rules for ChatGPT-User and OAI-SearchBot. Use our Robots.txt Generator to create the correct setup.

Does blocking AI crawlers affect my Google ranking?

No. Googlebot and AI crawlers are separate, so blocking AI crawlers does not change your Google search ranking. Blocking Google-Extended, however, stops Google from using your content for its Gemini models.

Which AI crawlers should I block?

How do I verify if my robots.txt is blocking AI bots correctly?

Can I block some AI crawlers and allow others?

Do AI crawlers actually follow robots.txt rules?

Related Articles

Robots.txt Best Practices for AI SEO in 2026

The complete guide to robots.txt configuration for AI SEO. Learn how to balance AI visibility, content protection, and search engine access for maximum organic traffic in 2026.

What is GPTBot? OpenAI's Web Crawler Explained (2026)

Everything you need to know about GPTBot, OpenAI's web crawler for ChatGPT training. User-agent string, blocking rules, impact on SEO, and how it compares to other AI crawlers.

Google-Extended vs Googlebot: What Website Owners Need to Know (2026)

Learn the key differences between Google-Extended and Googlebot. Understand how each crawler affects your SEO, Google AI Overviews, and Gemini visibility in 2026.

Brian specializes in AI SEO and web crawler optimization. He built AI Crawler Check to help website owners navigate the rapidly evolving landscape of AI crawlers and search.
